We humans are messy, illogical creatures who like to imagine we’re in control, but we blithely let our biases lead us astray. In Design for Cognitive Bias, author David Dylan Thomas lays bare the irrational forces that shape our everyday decisions and, inevitably, inform the experiences we craft. Once we grasp the logic powering these forces, we stand a fighting chance of confronting them, tempering them, and even harnessing them for good. Come along on a whirlwind tour of the cognitive biases that encroach on our lives and our work, and learn to start designing more consciously!

Website – https://www.daviddylanthomas.com/

Full Transcript

Speaker 2 (00:18):

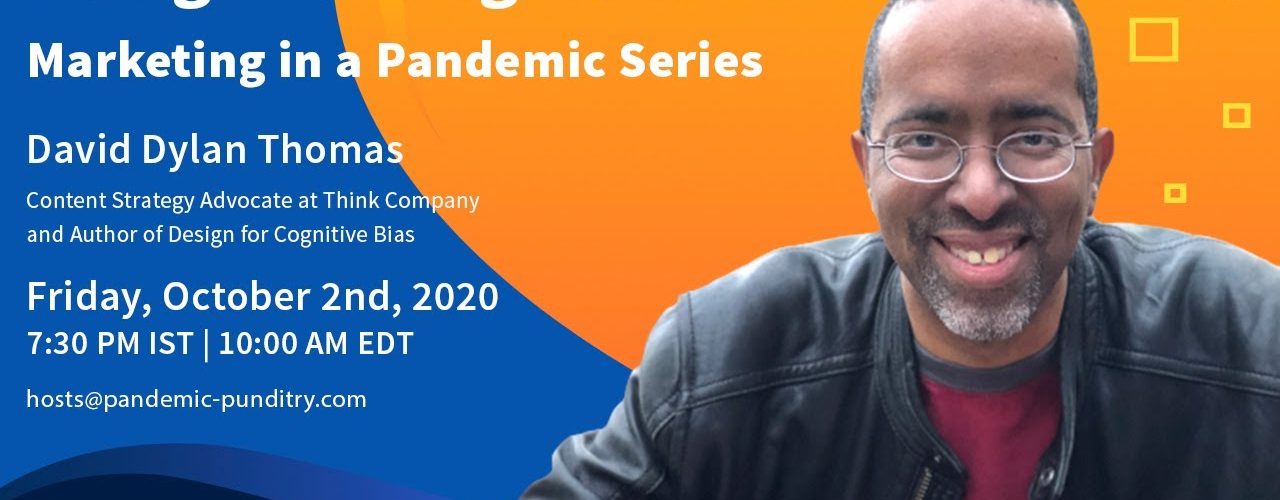

Okay. I think we’re officially ready to go. Good morning, good afternoon, good evening, wherever you’re joining us from around the world. Today we are thrilled and honored to have with us David Dylan Thomas. He is the content strategy advocate for Think Company and, more importantly for our topic today, he’s also the author of Design for Cognitive Bias. He actually wrote the book. So that’s what we’re going to talk about today: designing with cognitive bias in mind. So we’re really honored to have you with us, David. Dave, I’m going to call you Dave, by your preference. Welcome to Pandemic Punditry, the marketing in a pandemic series. Thank you so much for having me. Pleasure to be here. Thanks. And I just have to warn the audience that I’m on some serious pain medication because I dislocated my shoulder.

Speaker 2 (01:11):

So if I’m not as clear as I normally am, that’s probably the reason. So, just for the benefit of the audience: the term cognitive bias may be something people can guess at, and it’s probably self-explanatory to some extent, but I think you have a particular definition of it, and maybe we can start the conversation by defining it for the benefit of our audience. What do you mean by cognitive bias, and why is it critical for everything that we do? Sure. So cognitive bias is really just a fancy word for a shortcut gone wrong. You have to make something like a trillion decisions every single day. Even right now I’m making decisions about how fast to talk, where to look, what to do with my hands.

Speaker 2 (01:56):

If I thought very carefully about every single one of those decisions, I’d never get anything done. So it’s actually a good thing that we live our lives mostly on autopilot. Sometimes that autopilot gets it wrong, though, and we call those shortcuts gone wrong cognitive biases. Okay. So this is inherently a survival instinct or skill, right? Having cognitive biases allowed us to evolve into who we are today. If we didn’t have cognitive bias, we’d probably get eaten by something. Is that a gross simplification? Well, it’s really that if we didn’t have the shortcut, we would get eaten by something, but the shortcut goes wrong, right? Sometimes it’s harmless. Like, there’s this great bias called illusion of control. The way it shows up is, if you’re playing a game where you have to roll a die and you need a high number, you’ll roll that die really hard, but if you need a low number, you’ll roll really soft. And that doesn’t make any sense. It’s an error in judgment, but it’s a shortcut, right? We like to think we have control where we don’t, so we embody that in how we roll the die. So it’s really more that the shortcuts themselves are helping us get through the

Speaker 3 (03:00):

day, but sometimes the shortcut is like, “Ooh, oops.”

Speaker 2 (03:03):

Okay. So what would be some of the particular biases, the shortcuts you’re talking about, that you want to address today and that we want people to pay attention to? Specifically, what are these cognitive biases that you feel get in the way? It’s a shortcut gone wrong, right? So would you give us some examples, so that the viewers understand what you’re particularly focusing on?

Speaker 3 (03:33):

Sure. So the one that really got me started is this notion of how pattern recognition can lead to unfair hiring practices, for example. What I mean by that is, let’s say someone is hiring a web developer in the States. If I just say the words “web developer” to someone in, like, Silicon Valley, the image that might pop into their head without even thinking about it might be a skinny white guy, right? And when they see a resume where the name at the top doesn’t fit that, they start to give it the side eye. And it’s not because they actually believe that men are better developers than women or that white people are better at developing than people of color.

Speaker 3 (04:18):

But the pattern that’s been built up their whole lives through television, movies, and offices they’ve worked in is: oh, skinny white dude equals web developer, web developer equals skinny white dude. That’s just the math their head is doing. That’s the shortcut their mind is taking. And that ends up hurting somebody who otherwise might have a good chance of getting a job as a web developer. So those are the kinds of biases that I feel are harmful. That’s when we need to be concerned: when the shortcuts are actually, eventually, hurting people.

Speaker 2 (04:46):

And I think you’ve also got a tremendously interesting example in your book about the hiring bot Amazon had.

Speaker 3 (04:55):

Oh yeah, you’re up to speed on that one. Sure. So a few years ago, Amazon created an algorithm that was going to help them speed up the hiring process. It was going to look at resumes as they came in and give people an idea of which ones to pay attention to. They noticed, though, that the algorithm was really just recommending men. It was a very sexist algorithm, so much so that if a resume had the name of a women’s college on it, it would demote the resume. And when they tried to figure out why the bot was so sexist, they said, okay, well, what did we train the algorithm on? What data did we give it to start with? And they had given it, like, the last 10 years of resumes submitted to Amazon, and what a lot of those had in common is they were coming from men. And so the AI takes one look at that and says, gee, you sure must like dudes. And then it just keeps recommending dudes.

Speaker 2 (05:44):

Wow. So this particular example brings to mind one of the emerging tech trends. I don’t know whether you’d call it a trend anymore; it’s actually a reality: we are letting loose a whole bunch of AI, artificial intelligence agents, across lots of different platforms, right? And the accuracy that we assign to those has to do with the fact that they look at lots of data, so they learn or teach themselves. And so, as you were saying, if, like Amazon did, you let them loose on lots of historical data, it could be that the data itself is biased. For example, it is biased against women, because women are just getting into tech and breaking into STEM, so they’re obviously going to be underrepresented, if you take that example. Similarly, I think, African-Americans in certain jobs. I mean, the data is full of biases in some sense. So from all of that historical data, the AI is going to learn. So essentially this so-called smart thing is actually going to be quite dumb when it comes to things that actually matter from a social impact standpoint.

Speaker 3 (07:00):

We need to be extremely careful about it. And I think it’s more about our misinterpretation of what AI is. Meredith Broussard has written a fantastic book called Artificial Unintelligence, and it does a great job of demystifying AI, because basically AI is a prediction machine. You feed it a lot of data about what’s happened in the past, and then you ask it, based on that data, to make predictions about the future. She gives a great example of an exercise you might do in Algorithms 101, where you take the data on all the people who died on the Titanic and the data on all the passengers, right? And you’re going to create an algorithm that’s going to predict, based on the passenger list, who’s going to die. And it’s looking at factors like, okay, how many people who died were in steerage versus had a first-class ticket, right?

Speaker 3 (07:46):

How many were men versus women, blah, blah, blah. And you give it, like, half the data. So you say, okay, these people had these stats, and these people died and these people didn’t. Now I’m going to give you some fresh data that I didn’t give you before, and you tell me, you predict, which ones died and which ones didn’t, right? And depending on how good a job it does, you say, oh, good AI. Right? But that’s it, right? If you give it faulty data, or if there’s data you can’t give it that’s actually crucial to making the decision, then it’s going to make faulty predictions. And one of our myths about AI is that it’s this all-knowing, Spock-like figure, this purely logical Vulcan, that’s going to look at the world and make an unbiased judgment on it, when in fact nothing could be further from the truth. It’s going to make predictions based on what you show it. So if you show it a racist, sexist world, it’s going to make racist, sexist predictions.
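Editor’s sketch: the hold-out exercise described above can be illustrated in a few lines of Python. This is a minimal sketch under stated assumptions, not the exercise from Broussard’s book: the records are invented for illustration (they are not real Titanic data), and the “model” is just a majority vote per (ticket class, sex) group; the names `fit`, `predict`, and `accuracy` are hypothetical helpers.

```python
from collections import Counter, defaultdict

# Hypothetical, hand-made records (NOT real Titanic data):
# (ticket_class, sex, survived)
TRAIN = [
    ("first", "female", True), ("first", "female", True),
    ("first", "male", True), ("first", "male", False), ("first", "male", False),
    ("steerage", "female", True), ("steerage", "female", False),
    ("steerage", "female", False),
    ("steerage", "male", False), ("steerage", "male", False),
]
# Fresh records the model never saw while learning.
HELD_OUT = [
    ("first", "female", True), ("first", "male", True),
    ("steerage", "female", False), ("steerage", "male", False),
]

def fit(rows):
    """Learn the majority outcome for each (ticket_class, sex) group."""
    counts = defaultdict(Counter)
    for ticket_class, sex, survived in rows:
        counts[(ticket_class, sex)][survived] += 1
    return {group: c.most_common(1)[0][0] for group, c in counts.items()}

def predict(model, ticket_class, sex):
    # Groups never seen in training fall back to "did not survive".
    return model.get((ticket_class, sex), False)

def accuracy(model, rows):
    """Fraction of rows where the prediction matches what actually happened."""
    hits = sum(predict(model, c, s) == y for c, s, y in rows)
    return hits / len(rows)

model = fit(TRAIN)
score = accuracy(model, HELD_OUT)
print(f"held-out accuracy: {score:.2f}")
```

The model can only echo the patterns in the rows it was shown: here every steerage man is predicted to die, because that is all the training rows contain for that group. That is the sense in which biased input yields biased predictions.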

Speaker 2 (08:35):

And it’s going to do that at speed and at scale.

Speaker 3 (08:38):

And what’s really dangerous about it is the value we give it. So I’ll give you another example: the COMPAS algorithm. This was a company that was trying to create software to inform judges’ decisions, right? So you might have somebody up for parole, or somebody for whom you’re deciding what sentencing recommendation to give. And theoretically, the unbiased algorithm is supposed to look at that person’s record and tell you how likely they are to commit a crime again. The problem was, for the same record, it was saying that black people were more likely to commit crimes again than white people. And it literally got it wrong. Like, there was one guy where it said, this person’s going to commit a crime again, a black guy, and he didn’t. Then for the white guy it was like, oh, he’s low risk.

Speaker 3 (09:28):

And he did. Right. So it was just categorically wrong. But the problem was that the survey data they fed it had questions in there that were supposed to predict criminal behavior. And there were questions like: is anyone in your family in jail? Do you feel safe in your neighborhood? All these sorts of things that were proxies for race, right? So in a way, it was making things even more biased and, to your point, scaling that. And what made it so dangerous isn’t just that it was giving bad advice to judges. It was giving advice to judges that could let the judge wash their hands of the decision and say, well, it’s not me, the computer said so. That’s the dangerous part: we allow this thing to take over our judgment, right?

Speaker 3 (10:13):

Because, hey, who has time to look through all these resumes? Who has time to look through all these criminal records? Let the computer do that, and I’ll just make the final decision. Right. That’s the myth we have. And if we rely that much on AI because we think it’s unbiased, that’s where the scale comes in. If we had a realistic view of AI, like if that Amazon bot’s job was to detect bias, well, good job: you just detected a very big bias that this company has. Right. But that’s what makes it so dangerous: how we treat the AI, what we think of it.

Speaker 2 (10:46):

Wow. I mean, this sort of makes your head spin a little bit, because in the tech industry, we all know the management ethos that all the MBAs are fed is: hey, don’t rely on instinct and gut. I mean, obviously that can have its own cognitive bias, but data doesn’t lie. You hear that all the time, right? Use data. Data-driven decisions. These are all the buzzwords one hears in the latest management science that goes around. And you are now clearly pointing to the fact that, yeah, the data can lie, and it can lie at significant scale, simply because it’s not really an intelligence of its own. It’s predictive, as you say. So it’s going to look at all our inherent biases, amplify them ten times, and tell you the same thing, except now with the authority of “I’m right because I’m data,” right?

Speaker 3 (11:44):

Yeah. Well, the thing that we forget is that data is socially constructed, right? We like to think of data and numbers as being impartial and cold, or even of computers and technology as impartial, but all of it is made by people. For any data set you see, a human being decided what goes in that data set and what doesn’t. And if you don’t know what their biases are, you are not going to know what biases are inherent in the data.

Speaker 2 (12:11):

Absolutely. So now, this concept of cognitive bias, and I know that your book deals with this quite a lot: what advice do you give? Is the book, and the stuff you’re talking about, particularly useful for people who design or create content, or is this for everybody who makes a product or service? Does this concept of cognitive bias pervade lots of things that we do in our everyday lives? I mean, this is not just about content. This could be about a handbag design or a car or a medicine that you bring to market. I mean, am I right?

Speaker 3 (12:55):

Yeah. Yeah. It’s definitely for everybody. I mean, the response I’ve gotten from people who’ve read the book is: oh yeah, I want to show this to my boss, I want to show this to somebody in HR. And I’m very glad to hear that feedback, because that’s intentional. In the intro to the book, I sort of define the designer’s job as someone who helps other people make decisions. And that goes beyond visual designers or UX designers or content people. I think that almost everybody’s job, in some way or another, is to help other people make decisions. And the idea is that if you don’t really understand how people make decisions, you’re not going to be very good at your job. So that’s why. And the examples in the book aren’t, like, code, right? They’re, I hope, fairly relatable examples, no matter what industry you’re in. So it’s called Design for Cognitive Bias, but it’s really just: hey, here’s how you help people make decisions, no matter what your job is. And by the way, now that you know this, you’re kind of responsible for helping people make better decisions.

Speaker 2 (13:59):

I’m sure you have tons of questions. I know you’re waiting in the wings, wanting to get in. So, yeah.

Speaker 4 (14:05):

That’s all right. Hi David. I was just reading this recently: interestingly, the World Economic Forum put out an article, literally a few weeks ago, on the three cognitive biases that are perpetuating racism at work. And bear with me on this one. The first one they mentioned was moral licensing. And funnily enough, in a 2010 study, they said, participants who had voiced support for the US presidential candidate Barack Obama just before the 2008 election were less likely, when presented with a hypothetical slate of candidates for a police force job, to select a black candidate for the role. And the hypothesis was that, presumably, this was because the act of expressing support for a black presidential candidate made them feel that they no longer needed to prove their lack of prejudice. Could you speak to that for us, please?

Speaker 3 (15:10):

Oh my God. So, moral licensing. Malcolm Gladwell, I think in the very first episode of his podcast, actually talks about this, so that was my introduction to it. It’s fascinating. Basically the idea with moral licensing is: if society deems something a value, like recycling (and there’s an actual experiment on this), like recycling is good, it’s good to be green, I am now going to feel some obligation to look good in the eyes of society. So if that’s my motivation for being green, not that I actually care about the environment, but that I care about how people view me, I am going to do the least amount possible to check that box. So there’s an actual experiment where you have people, I think, fill out a form or something that says that they’re going to be green, or that they are green, or some sort of demonstration of their greenness.

Speaker 3 (16:03):

And then you have in that room a recycling bin and a trash bin, and you give them, like, a bottle of water or something incidental. And when they’re finished with it, the people who checked off the “I’m going to be green” box or whatever are more likely to chuck that thing in the trash than the folks who didn’t make that commitment. Right. Or as likely, right. So it’s one of those: I’m not doing this because I actually care about the environment. I do it because I care about how people view me, or how I view myself. A friend of mine, Erica de France, boils this down so simply. She’s like, if you want to predict human behavior, ask yourself what is going to make that person feel good about themselves.

Speaker 3 (16:44):

Right. So if voting for Barack Obama made them feel good about themselves, not because they truly loved black people or truly wanted equality in the world, but because they just wanted people to get off their back, then for any subsequent decision it’s like: well, I’ve already proved that I’m for black people. I voted for him. Here’s my license, right, literally a moral license. Here’s my “I love black people” license: I voted for Barack. Now I get to say the N-word. That was another thing that happened after Obama came to office. People suddenly started being like, oh, I can use the N-word all the time. And it was like, no, you can’t. You also see this with a lot of countries that vote in female prime ministers for the first time, right? They turn right around.

Speaker 3 (17:28):

Right. This happened in Australia. They turn right around. And honestly, the proof of the pudding is: don’t just look at the role of that minority in public-facing situations. Look at the role of that minority in private. So in Australia, when they had their first female prime minister, most women were still working at home, were considered servants in their households. That didn’t change. Same thing here. It’s not a coincidence that the Black Lives Matter movement sprang up during the time of Obama. It’s because even with a black president, white cops were still shooting black people. Right. That didn’t change at all. It might’ve actually increased. So that’s moral licensing, and I’m not shocked that that’s what they pointed to. Yeah.

Speaker 2 (18:17):

Yeah. So how does one address cognitive bias then? I mean, everybody has them, right? Nobody can take a holier-than-thou attitude about that and say, well, I don’t have any cognitive bias. We all have them, because it’s part of our evolutionary process and our socialization. So question one would be, how does one mitigate against that, and two, where is cognitive bias actually useful?

Speaker 3 (18:45):

Hmm. Yeah. So the whole book is really trying to answer that question, right? Now that we know that we have bias, what do we do? And there are usually two overarching strategies. One, you mitigate. Going back to that example of the web developer job, you mitigate by removing the opportunity, or limiting the opportunity, for the bias. So, anonymized resumes, right? If the name is throwing you off in your decision making because you’ve got this little voice in the back of your head, I’m just going to take away the name. I’m going to take away what college they went to. I’m going to take away maybe what jobs they had, like the companies they worked at, because that might be biasing. I’m just going to show you the qualifications. I’m going to show you the things that are actually going to help you make the decision.
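Editor’s sketch: the anonymized-resume idea above amounts to hiding the fields that tend to trigger the bias before a reviewer sees the record. The Python below is a minimal illustration only; the field names (`name`, `college`, `employers`, and so on) and the sample values are hypothetical and would need to match whatever a real applicant record contains.

```python
# Field names are hypothetical; adapt them to the real applicant record.
REDACTED_FIELDS = {"name", "college", "employers"}

def anonymize(resume: dict) -> dict:
    """Return a copy of the resume without the fields likely to trigger bias."""
    return {key: value for key, value in resume.items()
            if key not in REDACTED_FIELDS}

# Invented example record, for illustration only.
resume = {
    "name": "Jane Doe",
    "college": "Example College",
    "employers": ["Example Corp"],
    "skills": ["JavaScript", "accessibility"],
    "years_experience": 6,
}

reviewer_view = anonymize(resume)
print(reviewer_view)
```

The original record is left untouched, so the hidden fields can still be restored later in the process, for example once a shortlist has been decided.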

Speaker 3 (19:30):

Right? Because that’s my job: to help them make decisions. So if I’m designing something that’s going to help you make a decision, I need to make sure I’m not showing you things that are going to bias you. Or maybe I need to slow you down. A lot of bias comes from thinking too quickly, and as designers we’re used to thinking of, oh, frictionless experiences, get that thing done as fast as possible. It’s like, no, actually, sometimes I need to slow you down. So I’m going to introduce design elements or content elements that slow you down. So one way or another, I’m going to use design to mitigate that bias or, to your other point, use it for good. So there’s a thing called the framing effect, where the way I describe a situation can influence your decision. Now, I can use that to trick you, right?

Speaker 3 (20:11):

That’s what dark patterns are. They’re framing something in a way where you’re going to check a box you don’t really mean to check. Or I can use it to frame a decision you’re about to make in a more pro-social way. So the University of Iowa did a thing where, previously, student evaluations of teachers were very biased against women. They simply added a couple of paragraphs to the beginning of the survey the students got, saying: hey, look, these things are usually biased against women, so make sure you’re making your judgments based on real things, not appearance or something. And the people who got those two paragraphs tended to judge less harshly. So that’s the mitigating strategy. The other strategy, though, is to introduce other perspectives. So if you are the designer creating the design and you’re trying to get around your own bias, the best way to do it is to introduce other biases, right? Other people who have different perspectives, who can challenge your own.

Speaker 2 (21:09):

So would that be, I mean, you talk about this concept of red and blue teams. Is that right?

Speaker 3 (21:14):

Yeah. So that’s a classic example, right? Red team, blue team is an exercise where you have a blue team. Let’s say you’re building an app. They’re going to do the initial research and maybe get as far as wireframes and some ideas for prototyping. But before they go any further, you’re going to have a red team come in for one day, and that red team’s job is to go to war with the blue team and point out every false assumption, or more elegant solution, or potential cause of harm the blue team missed because they were so in love with their first idea. And again, it’s this idea of: I’m going to bring in someone else. Not someone who’s unbiased, because everybody has bias, but someone who has fresh eyes, with a different perspective than I do. And together we’ll arrive at a less biased outcome.

Speaker 2 (22:00):

So, just to explore that idea a little bit more from a tactical perspective: somebody listening to this may be thinking, okay, the way to eliminate this is, look, I want to make sure that I’m not biased towards a gender or sexual orientation or race, so I’m going to create a design team that is quite diverse, where we have people representative of those criteria. Does that solve the problem, or does that introduce a different set of biases?

Speaker 3 (22:30):

Well, I mean, you’ve got to be careful, right? For example, we’re seeing this right now. On the Supreme Court in the States, Ruth Bader Ginsburg, rest in power, has passed away. She was a very liberal voice on the court. And one might, standing back from that, say: oh, that was a female justice, let’s make sure to replace her with a female justice. That’ll make it an equal bias. When, I think everyone knows, Trump’s pick for that role is a woman, but has a very different set of standards. So it isn’t simply a matter of quota filling per se. You have to look at the whole intersectional person and the whole intersectional set of biases that are being introduced. So I think a really good exercise for this is an assumption audit. It’s where a team, at the beginning of a project, before they kick it off, just sit in a room and lay all the biases out on the table. And it’s like: hey, I am a black man. I’m American. I have not been to jail. I’m fairly wealthy. English is my first language. I’m hetero. I’m 46 years old. You just lay all of that out there.

Speaker 2 (23:46):

And everybody kind of just creates that big old pool of intersectionality. And then you start asking questions like: okay, how might these perspectives influence the outcome of this design? And then you ask: well, who’s not here? Anybody here ever been incarcerated? No? Okay, because that’s like 2 million Americans. Anybody here ever had their immigration status questioned? No? Okay, that’s not here. And you get that on the table and then ask: okay, how is that absence going to influence the outcome? And then you can start having discussions about how we account for that as we move forward. So you want to be more intersectional when you think about perspective, and think about what opinions and biases are here and what biases are not here, before you jump to conclusions. I mean, that’s just a very brilliant observation.

Speaker 2 (24:33):

I mean, guidance there. Intersectionality, I think that’s the real key. I think people think too much in terms of outward appearances, demographic sorts of concepts, when in fact you’ve got to think in terms of mindsets, going back to these assumptions. I think that’s the key there, and a really good piece of advice. I’ve seen this when I led design thinking workshops. We sort of had diversity: we’ve got somebody from accounting, somebody from marketing, somebody from sales, a mix of men and women, whatever. And funnily, they would always produce the same thing, because what all the companies I was advising were not taking into account was the fact that, while you may have the appearance of a diverse team, it’s actually not, because in terms of thinking and orientation and outlook, they’re all the same. In fact, they’re clones, sex and other things aside. So I think intersectionality is a good way to crack that nut. I know we’ve got a couple of questions also.

Speaker 4 (25:38):

Yeah. We have one question from chaplain. Thank you for joining us today. “Moral licensing was point one; what are the other two points?” Okay. I will bring that up right now. So the other two points were affinity bias and confirmation bias.

Speaker 2 (25:58):

How are they defining affinity bias?

Speaker 4 (26:02):

They’re defining affinity bias as our tendency to get along with others who are like us and to evaluate them more positively than those who are different.

Speaker 2 (26:13):

Yeah. There’s a great, terrible example of this in hiring practices. They noticed that when white interviewers were interviewing black applicants, they would sit further away and give them less time to answer questions. And when it was a white applicant, the opposite. And this created a terrible cycle where the black applicant would come in, subconsciously notice all of that, and start bombing the interview, because they were thinking, oh, I must be bombing the interview, and they’d start acting like that. And then the white interviewer would look at that, see them behaving differently, and be like, oh, this person’s

Speaker 3 (26:44):

bombing the interview. Right. And that would create this cycle: they’d again start giving signals that the applicant was bombing. And then you’d see a positive cycle of reinforcement when it was a white applicant, who was like, oh, he’s sitting closer, he’s giving me more time to answer, this must be going well. Right. So yeah, I can totally see where affinity bias would be a huge contributor to some trouble.

Speaker 4 (27:03):

Yes. So confirmation bias is the tendency to seek out, favor, and use information that confirms what you already believe.

Speaker 3 (27:11):

Yeah. I don’t know how it is elsewhere, but I can tell you right now, I mean, the title of this show is Pandemic Punditry. In the States, confirmation bias has taken hold in a big, bad way when it comes to coronavirus and the politicization of masks. Affinity bias kind of comes into it too. But the information ecosystem we have here is basically rampant confirmation bias. It’s confirmation bias at scale. You could write a textbook about the information ecosystem in America. Like, that’s it.

Speaker 4 (27:46):

Sorry, I have a follow-up question on the dark patterns that you discussed just a while ago. It just kind of triggers something in my memory, because, I mean, when you read about sociopaths and psychopaths (I don’t know this, so it could be a totally stupid question, right?), are they actually sort of dark patterning, using our own cognitive biases against us so that we believe certain things they say, or do what they want, or act in a certain way?

Speaker 3 (28:16):

So, just to clarify, I’ll start by saying that I use the term dark patterns, but we need a better term. I’m trying to get into the habit of using “deceptive patterns” as a less biased term. But to clarify what we’re talking about there: imagine you’re filling out a form, and there’s a part of the form that’s like, terms of service, check this to say that you’re sure. And then below that there’s a CAPTCHA, like, prove you’re not a robot. And then there’s a little thing that says “sign up for our newsletter,” done in such a way that you think that checkbox is mandatory, because everything around it is mandatory. Okay. That’s a dark pattern. I’m trying to trick you into filling out something really different. Right. So when we’re talking about sociopaths, it’s interesting.

Speaker 3 (29:07):

I have a second degree of, you know, distance from this. This is what I’ve culled from discussions with my wife, and like I said, I am not an expert. But my understanding is that when you’re talking about a sociopath, and especially a psychopath, you’re talking about someone who is very good at mimicking human emotion but does not have the same responses, the same kind of empathy. Like if you’re watching a movie and someone breaks their leg, right, a psychopath traditionally doesn’t really get fazed by that. They don’t have that same, you know, reaction. And as such, and this veers more toward sociopathy, it’s not so much about some kind of bloodlust, like, I’ve got to go and kill somebody. It’s more like, you know, I don’t like the way that guy chews gum, so I’ll just kill him. That way

Speaker 3 (29:57):

I don’t have to listen to him anymore. Like, that is as reasonable a response as, I don’t like the guy doing that gum smacking, so I’m just going to leave the room. It’s really just, which of those is going to be more convenient for me, right? Not, oh my God, it’s wrong to kill. So all that to say, to your point about whether they’re using deceptive patterns: the sort of high-level psychopaths and sociopaths are very good at mimicking human emotion to get you to do what they want. Manipulation is something they’re very good at. And you would have serial killers, right, if we’re talking about that class of people. There’s a class of serial killer who would kind of have to just break into your home and kill you, and there’s another kind that could just sort of kill you at their convenience.

Speaker 3 (30:37):

Right. Luring you in, or going about their day as if they didn’t have a body in the fridge. Jeffrey Dahmer literally had bodies in his fridge, but would just go about his day like everything was fine, because in his head it was: this is not a problem. There’s nothing wrong with me. I get that society doesn’t want me to have a body in my fridge, but I can behave as if I agree that, yes, that’s terrible. Right. So if that’s what you’re talking about, yeah, there are some psychopaths and sociopaths who are very good at manipulating you into thinking that everything’s cool.

Speaker 2 (31:09):

Is it okay at any point? I mean, for example, let’s say that we’ve been tasked with designing something for senior citizens. Right. And so, you know, obviously we will want to get the positive biases, I guess. I don’t know how you make a value judgment there, but you want to design with a bias towards a senior citizen. Maybe the letters are bigger. Maybe the objects themselves are easier to handle, because you assume that the dexterity of your hands is less because of age. So are those cognitive biases, or are those just design criteria?

Speaker 3 (31:46):

I mean, it’s a little bit of both. I think they’re definitely design criteria. I’m put in mind of Microsoft’s accessible Xbox controller. That was born from, I think it was six months at the Cleveland Clinic, just observing people trying to use the prototype and talking to people. And what they did right there, I think, is something called participatory design. It’s this idea of, if you are going to design something for somebody, thinking about the people who are going to be most impacted by that design but also have the least power in the say of how that design goes, and then giving them as much power as possible. So whether intentionally or not, I think Microsoft gave the people who were, I think, in rehab, you know, recovering, in physical therapy, and dealing with any variety of disabilities, a lot of power, because it wasn’t done until they said it was done.

Speaker 3 (32:37):

Right. You give the decision-making power to the person who’s going to be most impacted by the thing you’re building. And honestly, in most cases, the person who’s going to be most impacted is not the person who has the most power. It’s your boss, it’s your CEO, who probably doesn’t have any disabilities. Right. So you think more about, hey, how can I give that person power? So in the, you know, elder care example, yeah, you would want to give as much decision-making power as possible to the seniors, since they’re going to be the ones most impacted by this design, in how it goes. Right? At the end of the day, are they just going to be handed the thing and told, okay, you’ve got to use this, good luck? Or is it going to be, I don’t want to give it to you until you say it’s done?

Speaker 3 (33:15):

Right. I think those are two very different paradigms, but I think the latter is the one that’s going to be more equitable. So I’m hearing sort of two mitigation criteria that we’ve discussed. One is intersectionality, right? So, you know, using intersectionality. The other one is participatory design, which also could, you know, make sure that you get people’s input, particularly from those who you are going to impact most profoundly, into the process. So you sort of design for the, yeah.

Speaker 3 (33:55):

You know, all of this exists in a world of budgets, right? You do not have infinite time to get every single potential perspective. So I think if you have to prioritize what new perspectives to introduce, you prioritize the people who are going to be most impacted and have the least power. I think power is the dynamic that you really want to think about when you’re trying to optimize for how we are going to spend time and budget on mitigating bias. Let’s spend time and budget on mitigating the biases that are going to hurt the most. That’s what we want to prioritize, right? Because when you do that first exercise where it’s like, who’s not in the room, you’re going to get a very long list. So the next step is to say, well, who on this list is actually going to be impacted the most by this design, and who in that group has the least power? Okay. Let’s make sure we talk to them. Let’s make sure we’re giving them more power. Great.

Speaker 4 (34:44):

Yeah, I was just wondering Dave, how can we use confirmation bias for good in terms of how do we use it to reinforce what the buyer’s thinking and help them along the buyer’s journey?

Speaker 3 (34:59):

So I have trouble finding a way that confirmation bias is inherently good. Here’s what I mean. The scientific method was invented a very long time ago to fight confirmation bias. What I thought the scientific method was is the situation where it’s like, oh, I have an idea about how the world works. Now I’m going to test that idea. And if I get a good result, I’m going to write down what I did, and other people are going to try that too. If they get a good result, we’re done, that’s it. Yay. Move on to the next thing. The actual scientific method, at least according to actual scientists I’ve talked to who corrected me, is more like this. I have an idea about how the world works. I’m going to test it out. If I get a good response, great. You all try the same thing.

Speaker 3 (35:47):

Good response, great. Now I get to spend the rest of forever trying to prove myself wrong. Right. I have to ask myself, if I’m wrong, what else might be true? And then try to prove that. That is a much more rigorous approach, but it was invented specifically to fight confirmation bias. So I feel like it’s not necessarily a good idea to encourage confirmation bias at any point. I think, in order to stay true to ourselves and true to the objective reality of the world, or get as close to it as we can, it’s kind of our duty to constantly question. And the buyer’s journey is one of those places where we have this notion that it is good to rush it. Right. I want you to think about Amazon’s Buy Now button, right? That is literally one click. It literally says one click. It’s not even trying to hide the fact that we don’t want you to think.

Speaker 3 (36:39):

And I think that could be dangerous. Let me give you an alternative approach to that. So Patagonia is a very environmentally conscious brand, and they don’t want you to ever return anything, because if you return something, that is literally doubling the carbon footprint of that coat, right? So before you hit buy on that coat, they’re actually going to put up roadblocks. They’re going to have big content experiences that really want you to learn about this coat and make sure you’re down with what its message is. Then they’re going to have a slowed-down buy flow that’s going to keep kind of being like, are you sure? All right, let me show you the size options. Still sure? Okay, let me show you this. Still sure? All right. Okay, let’s do this. Right. You are never going to find a one-click button on Patagonia. Now, part of that is their brand.

Speaker 3 (37:30):

But part of that is their mission. They owe a higher value than just to their shareholders. They owe a value, they feel, to the environment. And so they’re going to conduct a business. Yes, they’re still a business. They’re not a nonprofit. They are going to make money, and they do; those aren’t cheap coats. But they’re going to do it in a way that they feel is aligned with their values. And I think that actually is a good way to think about e-commerce in particular. This is a bigger discussion, and I was talking to someone about this yesterday, but it is good to be very clear about your brand not just in terms of, okay, we sell coats, but in terms of your values. I think that for a long time we’ve said, here’s the business discussion, here’s the ethics discussion, and never the twain shall meet, or you have to balance the two somehow.

Speaker 3 (38:21):

And I’m like, if your business model has to somehow get on a scale, right, because it’s somehow not inherently ethical, okay, we’ve already got a problem. Those should be the same. So I feel like that’s a long way of saying that I wouldn’t want to use confirmation bias to encourage the buyer flow. I would want to use actually the opposite to reinforce the buyer flow, so that by the time the person actually makes the decision, they are sure, because they’ve really tested all the alternative theories. And that, I think, makes them a more loyal customer, which is way more valuable to you. And so when you’re kind of clicking too fast

Speaker 2 (39:04):

And I’m sure for Patagonia, it also works in favor of the number of returns, which, you know, in retail is a killer, a hit to the bottom line. Right. That is the thing that people don’t talk to you about in retail, especially online. So I think that’s actually smart business, in addition to being sustainable from a planetary perspective. And I’m a big fan of them. But yeah, it makes good business sense. And to your point, I think it’s amazing that people don’t bring ethics and transactions together. I mean, it could make very good business sense, like this case does. And we probably need to start looking at that stuff more closely, given the pandemic that we are all going through. Right. So, yeah. Amazing.

Speaker 3 (39:58):

Dave, what about choice architecture and why we should care about it? Oh, absolutely. So choice architecture is a concept that I believe Thaler came up with in his book Nudge, with Cass Sunstein. It’s essentially this idea that the way a particular environment is architected, the way it’s created, the way it’s designed, influences decision making. All right. So they talk about this debate over school lunches and cafeterias: where do you put the healthy food, and where do you put the non-healthy food? You can structure literally where you put the food in a cafeteria based on any number of factors. You can do it from most expensive to least expensive, healthiest to least healthy; there’s any number of ways to do it. And yeah, it turns out if you make the healthier food easier to reach, essentially more prominent, it tends to move better.

Speaker 3 (40:48):

Right. And we’ve actually known this in retail for a long time. You’ve got something you want to sell, and there are certain places you can put it in the store, like those end caps, where it’ll move faster. Right. And the question is the why behind that design. Do you put the stuff on the end cap that you’re trying to move because it’s getting old? Do you move the stuff to the end cap that has a higher profit margin? Or do you put the stuff on the end cap that’s actually healthier and better for people? Whatever choice you make, that architecture is going to influence the behavior of your customers. So that’s the idea behind choice architecture. And it’s kind of the core idea of the book: your design decisions will influence people’s behavior, probably in ways they don’t even realize.

Speaker 3 (41:34):

And I bring this up a lot because people sort of ask, well, why even do this? It’s too dangerous; you’re manipulating people by using any of these techniques. So let’s just have a neutral design. And it’s like, that’s not a thing. I could randomly assemble, right, even the objects behind me. I didn’t actually arrange any of that; my wife did that. Right. And I don’t know that she had any particular agenda in mind, but if you look at that, your mind will start to tell a story about those objects. And if I change the order of the objects, again, even randomly, your mind will tell a different story about that, because we are pattern-making machines. That’s just how we live. We can’t help ourselves. So you can’t actually introduce a design that isn’t going to influence behavior. So at least try to make it good, because neutral just isn’t an option.

Speaker 2 (42:24):

So does neutrality actually exist? It doesn’t, right? I mean, theoretically speaking, we all have our cognitive biases. It’s something that we have to mitigate against using some of the techniques that you discussed. Have the problems that are going on, the stuff that we’re seeing in the U.S. in terms of Black Lives Matter and this, you know, refocus on systemic bias, racial bias in this case, had an impact on the book? I mean, has there been a positive impact, I guess, with people saying, hey, look, I need to start paying attention and, you know, we need to read what Dave’s got to say?

Speaker 3 (43:05):

Yeah. I mean, to be perfectly frank, you know, as this year has borne out, the book came out in August, late August, and we could see the writing on the wall. We basically tried to get the engine going a little faster to get it out a little sooner, because it was clearly relevant, more relevant than we expected. I’ve been talking about this stuff, you know, forever. I give a talk related to this book, called Design for Cognitive Bias. The first time I gave it was in 2017 at UX Copenhagen. And even from the very first time I gave it, there was a Black Lives Matter slide in there. It was in there because I was making a point about a bias called déformation professionnelle, which is basically the bias where you see the whole world through the lens of your job.

Speaker 3 (43:50):

And I told a story about how Chief Ramsey of the Philadelphia Police Department, maybe 10 years ago, asked his police officers, what do you think your job is? And they would say something like, to enforce the law. And he would say, okay, well, what if I told you your job is to protect civil rights? That’s a much bigger job than enforcing the law. It encompasses that, but you also have to treat people with dignity. I brought up that story then, and there was literally a Black Lives Matter slide up, to make the point that, you know, how you define your job is a matter of life and death. And I kind of extend that to us as designers. Our jobs are harder than we think, too, right?

Speaker 3 (44:30):

And we can’t define them in a way that’s simply, design cool stuff. We need to define them in a way that lets us be more human to each other, right? The same way that cops need to define their job in a way that lets them be more human to us. And obviously I’m still giving that talk, and that slide is still in there, and that paragraph is still in the book. I talk about this in the book too. And it gets so much more emotional; obviously, this is so in our face now. It’s always been going on, but it’s even more piercing now. But I left it in there because it’s even more obvious now how important that is. So yeah, this is all extremely relevant. And I do think that, you know, hopefully it’s giving people something to think about and a way to contextualize the Black Lives Matter movement beyond:

Speaker 3 (45:18):

Okay, this isn’t just about defunding the police. It’s about rethinking how we treat each other at the interpersonal level and at the systemic level too. Right. Because that’s the other big conversation that’s happening now, and not really so much 10 years ago: oh, is it just a few bad eggs? We need to rethink the entire police department. We need to rethink the electoral college. We need to rethink the entire, you know, criminal justice system. All of these conversations are happening now, talking about the system, as opposed to talking about this one law or this one policy.

Speaker 2 (45:53):

And I think, you know, the thing that we started with is still giving me some sort of palpitation or pause, you know, this whole concept of systems, right? Most of our systems are going the way of AI. Whether we like it or not, it’s happening. And even in the middle of this pandemic, even in the drug research that we’re doing, there’s a lot of AI being used across the board, because we want to do it faster, better, quicker. And so we are unleashing a lot of systems built on artificial intelligence and machine learning so some of this pattern stuff gets done. So if the testing was done mostly on white males and not on Indian and Chinese people, right, you’re going to have that bias, you know, from a genetic perspective.

Speaker 2 (46:35):

I mean, there’s a lot of that stuff also now built into the development of vaccines and drugs, and we’ve sort of unleashed it. So the system problem is compounded by the fact that the systems themselves have gotten a lot faster in some sense, and therefore these biases are getting accentuated quite rapidly. So I think the book is really timely, because it gives those of us who are in the tech area, or even in marketing, a reason to take a step back and say, hey, you know, we need to maybe slow down and look at these things that we design, be it, you know, an FMCG product or a computer system or a marketing campaign or content, and do this with these principles in mind. Are we actually understanding that there could be cognitive bias

Speaker 3 (47:30):

In there. I think that’s really what I’m hearing today. Yeah. And it has to be in the budget. Right. Because I think a lot of what’s motivating the rush, right, is, you know, capitalist forces, where it’s like, I get that, but we’re going to make more profit faster if I don’t stop and do an assumption audit. Right. Or, you know, the default is white males, and that’s kind of who I’m marketing to, so what if we just, right? Basically, you know, I’m making a moral argument and even, honestly, a business argument for slowing your customers down. But again, the bias is toward, well, going really fast has worked for us so far, so we’ll just keep going fast. Right. Like, I’m not seeing a hit to my bottom line, so maybe we just keep going fast. And I think that’s why, when I give these talks or when I’m talking about the book,

Speaker 3 (48:22):

I say my goal is to change hearts, minds, and budgets, because if it isn’t in the budget, it’s not actually happening. It doesn’t really exist. Right. And so I usually encourage people to start small with something like that assumption audit, maybe a one- or two-hour meeting. So I can go to my boss and say, hey, can I just get two hours in the budget? It’s a 60-day project. I just need two hours out of our whole 60 days to make it a little less likely we’re going to make something harmful. Right. And if that works, maybe on the next project I can be like, hey, remember how well that assumption audit went? Okay, this time, one day for a red team to come in. Just one day, it’s all I need. I know it’s more, but it’s not that much. It’s a 90-day project. One day. You still have 89 days to do everything else. Anyone can spare that one day. Right. But you seed that, right, almost like a virus inside the company, to the point where all of a sudden it’s like, oh yeah, of course you can have two weeks. We’re a responsible design company. Go for it.

Speaker 5 (49:17):

We have questions.

Speaker 4 (49:18):

Yeah. Actually, we have Frank Spillers joining us. He says, love the message: design with and for the customer, for customer values. Incidentally, we are actually talking to Frank next week, so it’s great he joined us. And we have Rittal asking another question: if we all have cognitive bias and technology’s not neutral, what’s the good news?

Speaker 3 (49:42):

Oh, well, the good news is we have had methods for making better decisions for literally thousands of years. The study of ethics, which is really just the study of how you ask questions around really thorny, fuzzy, non-hard-science issues, has been with us for literally thousands of years. We just never paid them very well. So when we say, okay, now we need to set up a method, red team, blue team, for example, that isn’t something I made up like two weeks ago. That’s been with us ever since we’ve had the military. Journalists have been using it for years. Cybersecurity has been using it for a very long time. Or the idea of quality assurance, right? QA has been with us in tech for a very long time. And no one would dream of launching a website without having QA’d it first, right?

Speaker 3 (50:33):

That would be crazy talk. What I’m talking about is basically an ethical QA, right? Sort of saying, okay, let’s run a quality check on the decisions you made to arrive at this design. Does that approach actually make sense? Daniel Kahneman co-wrote a great article for the Harvard Business Review years ago where he took the ideas from Thinking, Fast and Slow, which is a foundational work, you’ve got to read it, and a huge influence on my book. And he applied them to the decision-making process at an organization, which is super biased, sort of saying you’ve got to apply those same principles to slow down that thinking. So the ideas I’m talking about are all out there in terms of how to approach this stuff. It’s just a question of, are you willing to put it in your budget?

Speaker 3 (51:15):

Right? Are you willing to accommodate it? We did the same thing with accessibility. We started out with talking about how not everybody can see all colors all the time, and the reaction was, that’s a thing? Hold on, do I care? Why should I care about this when I’m trying to ship something? And we got to, oh yeah, it’s the law. And, you know, my company for one, our statements of work when we do work for you say AA in them. We will have people try to send us RFPs that are like, oh, we only need, you know, single-A compliance or something like that, really lower compliance. And I’m like, that’s nice, but we’re not going to do anything less compliant than AA. So that’s how far we’ve come. Right. And it’s taken 20 years or so. I’m not saying this is going to happen overnight, but the sooner, the better.

Speaker 4 (52:03):

Sorry, I just have a follow-up question. Obviously this pandemic has affected how we as human beings interact socially. Do you think it is having a long-term effect on user experiences?

Speaker 3 (52:20):

Oh my God, yes. And I can’t even begin to guess what that will be. That’s the one thing that seemed certain toward the beginning of this. And it’s funny, my wife is a pediatric neuropsychologist, so she is much more attuned to how healthcare works. And back in March, she was like, you know, Dave, sit down, this is going to be at least 18 months. And this is back in March, when everyone was like, okay, we’ll be fine by the summer, right? Maybe fall at the latest. And she was like, 18 months. And I’m like, oh. And, you know, at this point 18 months is like the best-case scenario.

Speaker 3 (53:04):

Thinking about that at that scale, when I realized I’m living through something at that scale, I thought back to 1918, I thought back to the Black Plague, I thought back to basically this idea that there are no world-changing events, right, nothing that affects this much of the globe at the same time in a similar way, that don’t fundamentally change something about the world. You get the Industrial Revolution, related in some ways to the Black Plague. There’s a whole bunch of changes coming out of the Spanish flu. Fundamental shifts happen with stuff like this. And it was sort of like, I know something fundamental is going to change from this. What that is, I’m not sure, but it’s going to be something, and we’re not going to go back to normal.

Speaker 3 (53:48):

And what’s interesting about this moment is you see people now trying to at least game that, because there’s a lot of people for whom normal wasn’t working. So it’s like, okay, maybe with this much uncertainty, it’s possible to get that systemic change. Right. Systemic change is hard. It’s rare and it’s hard. But it’s most likely to happen when there are world-changing events. So yes, I absolutely think it’s going to change UX. I have absolutely no idea how, right? It’s just too chaotic for any person to begin to guess.

Speaker 2 (54:32):

Yeah. But change is coming. I mean yeah,

Speaker 3 (54:36):

Good change, right? And I think that’s what everyone is trying to do. I mean, I’ll be honest, I feel a duty to do something, especially here and especially now, to introduce something good into the world. And I honestly hope that’s what the book does. I thought about, like, what can you do? What is the thing that you can do right now? There’s a lot of table stakes, like getting out to vote and all that stuff. But what can you personally do? What is your gift that you can bring to this moment? And for me, that’s why I hope this book is my way of trying to say, okay, I’m really good at giving talks and really good at writing, I’ve almost by accident studied the hell out of cognitive bias, and I’ve got this background in UX. Okay, let’s try this.

Speaker 2 (55:23):

Any more questions?

Speaker 2 (55:34):

So Dave, you know, I’ve had lots of people, when we first let people know that you were coming on the show, there was lots of interest from people who I normally didn’t think would be interested in the subject. Right. Everybody’s like, whoa. And maybe it’s a function of the pandemic, right? People are more aware of this concept. I mean, there’s, you know, the concept of fake news, people believing things that are hard to believe. There’s clear evidence of certain things, but people still disbelieve it. There are clearly systemic problems that are sort of coming apart, with light being shone on them globally across different countries. And I think it’s exposed this whole concept that we have biases, that they have been systemic, and that those biases are actually significantly hurting some of us more than others.

Speaker 2 (56:27):

And that could be insidious enough that it could be in everything we do. And it’s clearly the premise of your book. So this has been a fascinating conversation. I wish we had more time to delve into more depth, but I know Catholic has read a few chapters of the book, and I’m going to purchase mine. In addition to the research I’ve done on you, it seems like a really good book to have handy and read through. I encourage our viewers to do the same. Thank you again for taking the time out from your hectic schedule to join us today on Pandemic Punditry. It’s been fascinating, and, you know, I’ve come out a bit more educated than I was when I went in. And frankly, Coptic and I started this in April.

Speaker 2 (57:08):

I think we are close to our 50th episode now. And, you know, twice a week, at least, we spend an hour with someone fabulous from across the world. And for those two hours, at least, you know, we feel filled with hope and spirit. And I think in a pandemic time like this, that’s the best we can ask for, right? So even if it’s only two hours a week where we get to interface with amazing people all across the world, and they just help us inject hope into our lives, I think then we will have achieved what we meant to achieve by starting this podcast. So thank you very much for gracing our show, and all the very best with your book. I think we’ll put up the links for people to buy it as well. And again, thanks for joining us, Dave. Have a great weekend and a good upcoming week. Awesome. Take care. Thanks again.
